The High Cost of Going Cheap on Samples

Relying on cheaper samples can come at a high cost to your business. Here’s why…

Advances in technology have made surveys cheaper and more efficient to run, because it is now easier to find people who are willing to answer them. You can post polls on social media sites such as Facebook or Twitter, share Google Forms links by email or through messaging services such as WhatsApp, or post those links on the same social media sites: a cheap and readily available sample. You can also buy responses from low-cost sample platforms. These are all cheap and seemingly effective ways of getting respondents, but how can you be sure that the people answering your survey actually represent the population or target audience you are trying to reach?

What happens if the data you have gathered is completely wrong, and you don’t realise it when you use that data to make an important business decision? Once you consider the full cost of that bad decision, you’ll see the real HIGH cost of going cheap.

In this article, we consider the reliability and accuracy of surveys conducted through digital media. Social media polls and Google Forms sound like a reasonable enough source of information, but research shows that such sample sources can turn out to be completely unreliable: results for the same question can vary wildly, and can be grossly incorrect compared with results for the same question obtained through a different research methodology.

Here is an example of how cheap sample can lead to misinformation.

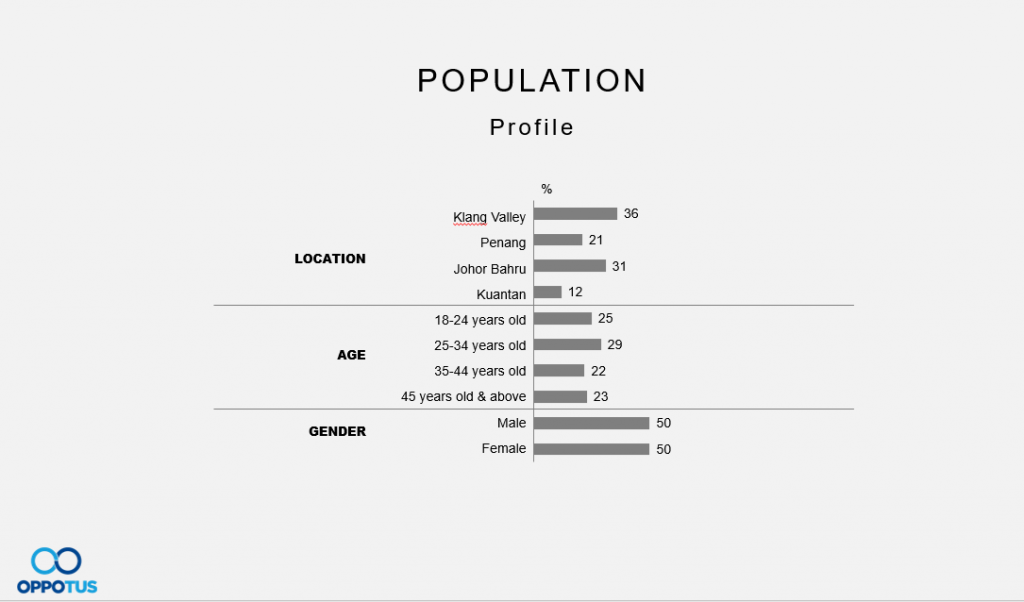

Let’s say that this is the general population distribution based on the current market.

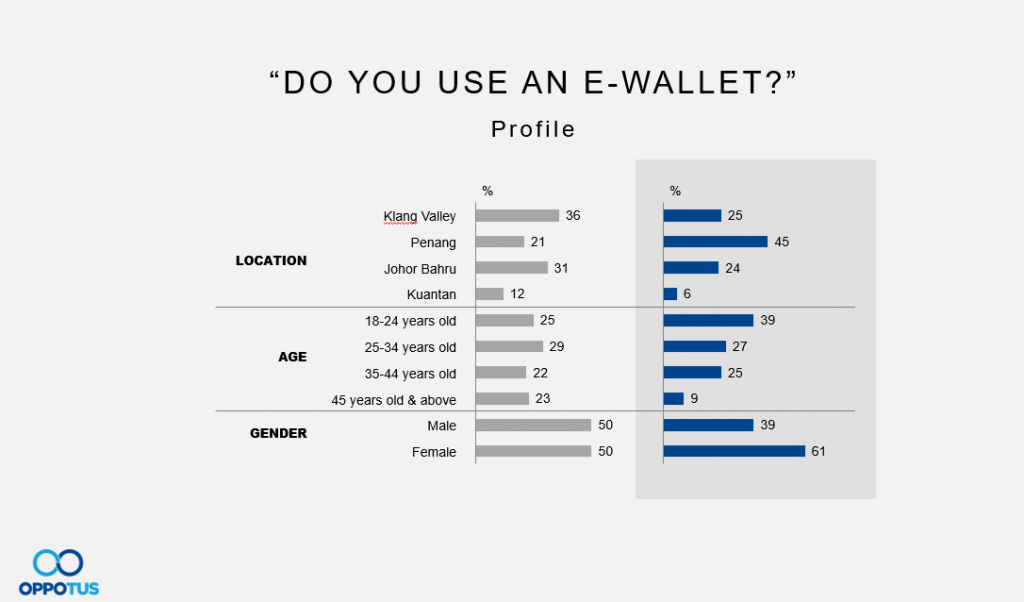

When we ran a survey on e-wallet usage through polls on social media platforms, the resulting data suggested that Penang is a hotbed of e-wallet use, especially among younger people (18-24 year olds). The data also suggested that women are generally heavy adopters of the technology.

These results would probably surprise many people who use the various e-wallet services, because they also suggest that there are comparatively fewer e-wallet users in the Klang Valley, home to Malaysia’s capital.

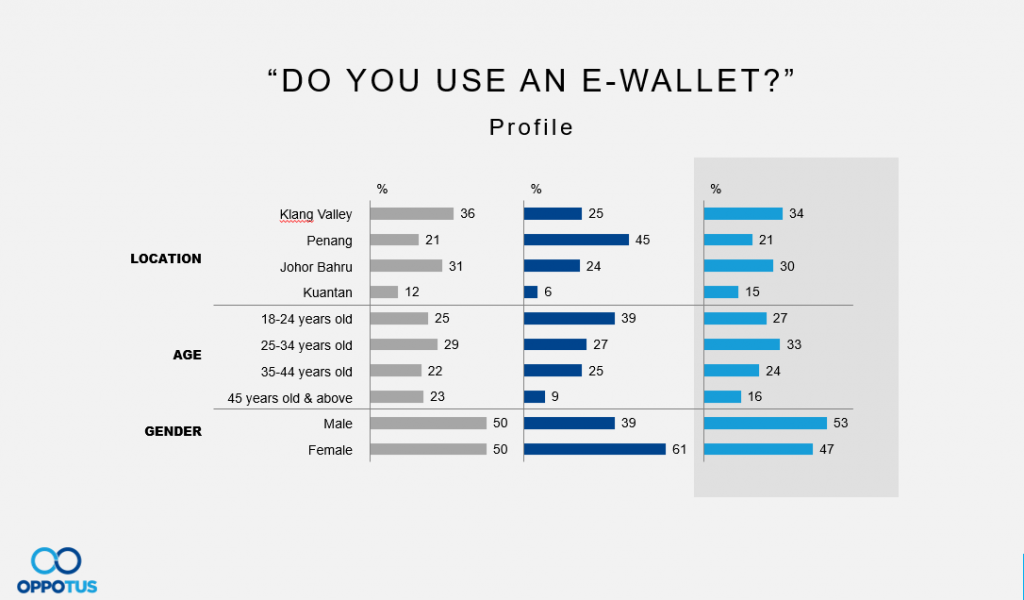

Thankfully, we have been systematically tracking e-wallet usage among Malaysians on the ground for some time now, which gives us much more reliable figures.

This data paints a very different picture from the one we saw earlier. Not only is most usage firmly in the Klang Valley, mirroring the population distribution, but it is also particularly strong among young male working adults.

This would certainly change how you approach and plan your customer acquisition strategy! Using cheap sample can be very dangerous, because you could end up wasting time and resources going down the wrong path.

So how did we manage to get such wrong numbers?

Here are some possible reasons why a survey’s numbers could end up being wrong:

- The sample was drawn from an unrepresentative set of people. The risk of this is high when all respondents come from a single source: for example, if the people who first saw the polls were mostly from Penang and shared them mainly with friends and family living in the same area. When conducting surveys, we generally need to ensure a good spread of respondents across our target audience so that this kind of bias is minimised.

- The data is weighted incorrectly because of errors in the imputation methods used to estimate respondents’ demographics. Demographics are usually estimated rather than measured directly, which understandably leads to errors, especially when certain alternative methods of recording demographic profiles are used. This leads us to our next point.

- The respondents didn’t bother to answer accurately because they didn’t care about the results.

It’s also quite possible that all of these factors played a part in the data being wrong.
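To make the weighting point concrete, here is a minimal Python sketch of post-stratification weighting by region, one common way survey data is weighted. All region shares and response counts in it are hypothetical, invented purely for illustration; they are not the e-wallet figures discussed in this article. Each respondent is weighted by their region’s population share divided by that region’s share of the sample. Note the limits: this corrects only the regional imbalance. If the Penang respondents are themselves a narrow friends-and-family group, or if their region was recorded or imputed incorrectly, the weighted estimate is still biased.

```python
from collections import Counter

# HYPOTHETICAL population shares by region, invented for illustration only.
POPULATION_SHARES = {"Klang Valley": 0.5, "Penang": 0.2, "Other": 0.3}


def build_sample():
    """Return a HYPOTHETICAL skewed sample of (region, uses_ewallet) pairs.

    Penang is over-represented (60 of 100 respondents), as might happen when
    a poll spreads mainly through one city's social circles.
    """
    responses = []
    responses += [("Penang", True)] * 45 + [("Penang", False)] * 15
    responses += [("Klang Valley", True)] * 8 + [("Klang Valley", False)] * 12
    responses += [("Other", True)] * 6 + [("Other", False)] * 14
    return responses


def poststratification_weights(sample_regions, population_shares):
    """Weight per region = population share / sample share.

    Regions with no respondents are skipped: weighting cannot create
    information that was never collected.
    """
    counts = Counter(sample_regions)
    n = len(sample_regions)
    return {
        region: share / (counts[region] / n)
        for region, share in population_shares.items()
        if counts[region] > 0
    }


def weighted_rate(responses, weights):
    """Weighted share of respondents who answered True."""
    total = sum(weights[region] for region, _ in responses)
    yes = sum(weights[region] for region, uses in responses if uses)
    return yes / total


if __name__ == "__main__":
    sample = build_sample()
    weights = poststratification_weights([r for r, _ in sample], POPULATION_SHARES)
    unweighted = sum(uses for _, uses in sample) / len(sample)
    print(f"Unweighted usage rate: {unweighted:.2f}")  # 0.59
    print(f"Weighted usage rate:   {weighted_rate(sample, weights):.2f}")  # 0.44
```

With these made-up numbers, the skewed sample gives an unweighted usage rate of 0.59, while weighting to the assumed population shares gives 0.44. A region with no respondents at all receives no weight, which is one reason weighting cannot rescue a sample drawn from a single source.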

There has been considerable research on why people respond to surveys.

One reason is that people get something material in return, such as money or access to certain content. Another is that some people value taking part in surveys because it lets their voices be heard and gives them the opportunity to contribute to a cause or community.

Psychologist Anja Göritz found that material incentives increase the likelihood that people will respond to a survey, but the overall effect is quite small, accounting for only a 3-5% increase in the overall response rate.

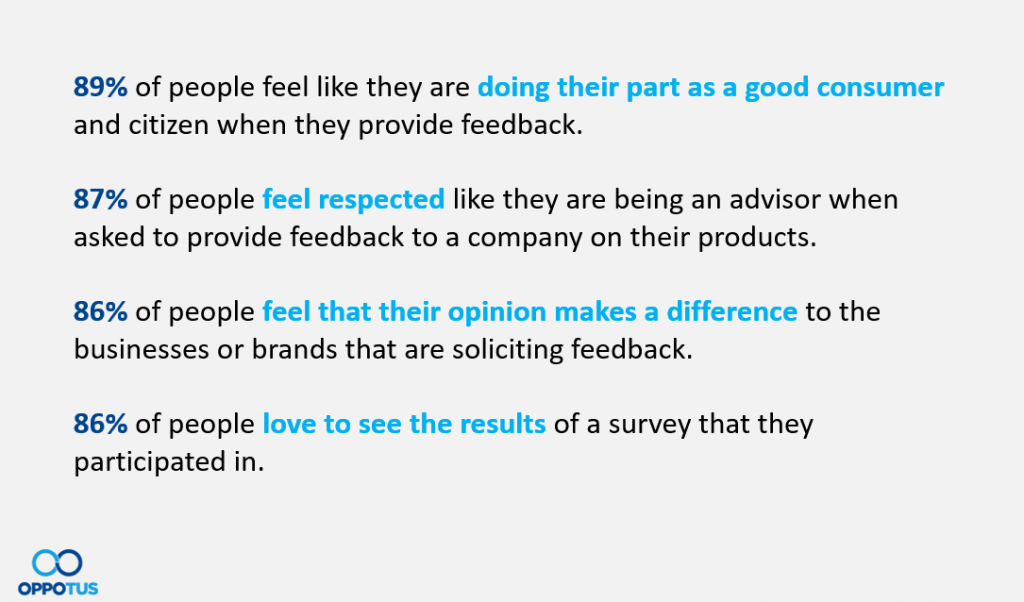

Göritz also reminds us that material incentives are just one way to influence the quality and quantity of data. Other ways to improve responses include personalised surveys, pre-notification, reminders, and appealing to people’s altruism when asking them to contribute to studies. Studies by Oppotus found that intrinsic motivations tend to have more impact on respondents than extrinsic ones, which highlights the value of having engaged respondents for your surveys. The results of their study showed the following:

All of these intrinsic motivations are inspired by the desire to contribute as a member of the community, rather than just being an individual doing a survey to get money or access to content.

So why is data sourced from informal internet surveys sometimes inaccurate and unreliable?

In those cases, the respondents are not being sourced in a way that ensures the sample is representative of the population or audience being studied.

Technology has certainly opened up many possibilities, and it is encouraging to see people becoming more aware of the need for, and purpose of, research and feedback in business.

Sharing a survey across multiple digital platforms is now very easy and can potentially reach a vast number of people. But when respondents arrive at a survey this way, it becomes increasingly unlikely that their data will represent the population, because we cannot control who answers the survey or how they answer it.

Conclusion

Not all sample is the same. We must not forget that the “sample” we are dealing with is actually a group of human beings who want to be engaged, respected, and to know that they are making a difference.

When you come across a sample source that seems remarkably inexpensive, ask how the sample is sourced, how good and accurate the data is, and what motivates the respondents.

With all of that in mind, now is the time to rethink the cost of getting incorrect data and making the wrong decision as a result. The cost of cheap sample is high.

If you would like to find out more about how we can help you get more reliable and accurate survey results to guide your business decisions, do reach out to us to start a conversation.